Putting scientific results in perspective: Improving the communication of standardized effect sizes

Abstract

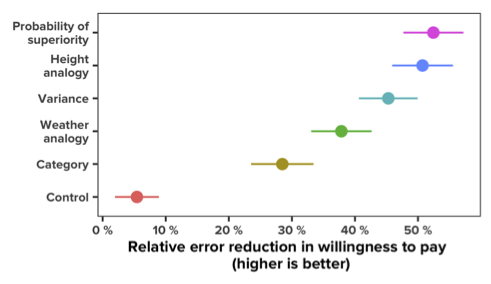

How do people form impressions of effect size when reading scientific results? We present a series of studies on how people perceive treatment effectiveness when scientific results are summarized in various ways. We first show that a prevalent form of summarizing results—presenting mean differences between conditions—can lead to significant overestimation of treatment effectiveness, and that including confidence intervals can exacerbate the problem. We attempt to remedy potential misperceptions by displaying information about variability in individual outcomes in different formats: statements about variance, a quantitative measure of standardized effect size, and analogies that compare the treatment with more familiar effects (e.g., height differences by age). We find that all of these formats substantially reduce potential misperceptions and that analogies can be as helpful as more precise quantitative statements of standardized effect size. These findings can be applied by scientists in HCI and beyond to improve the communication of results to laypeople.
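The contrast between a raw mean difference and a standardized effect size can be made concrete with a minimal Python sketch. It rests on stated assumptions. It uses Cohen's d and the probability of superiority as illustrative measures, because the abstract does not say which standardized measure the studies used. It assumes both groups are normally distributed with equal variance. The function names and example numbers are hypothetical.

```python
import math
from statistics import NormalDist


def cohens_d(mean_treatment: float, mean_control: float, sd_pooled: float) -> float:
    """Standardized mean difference: raw difference divided by the pooled SD.

    Illustrative choice of measure (an assumption, not taken from the paper).
    """
    return (mean_treatment - mean_control) / sd_pooled


def probability_of_superiority(d: float) -> float:
    """Chance that a random treated individual outscores a random control one.

    Assumes two normal groups with equal variance, where the difference of
    one draw from each group has mean d and SD sqrt(2) in pooled-SD units.
    """
    return NormalDist().cdf(d / math.sqrt(2))


if __name__ == "__main__":
    # Hypothetical numbers: a 5-point gain on a scale with SD 15.
    d = cohens_d(mean_treatment=105.0, mean_control=100.0, sd_pooled=15.0)
    ps = probability_of_superiority(d)
    print(f"d = {d:.2f}, probability of superiority = {ps:.2f}")
```

With these hypothetical numbers, d is about 0.33. Under the stated assumptions, a randomly chosen treated individual outscores a randomly chosen control individual only about 59% of the time, compared with a 50% baseline for no effect. This is the kind of information about variability in individual outcomes that a bare mean difference leaves out.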